What is the impact of GDPR on AI?

Article: GDPR, Artificial Intelligence

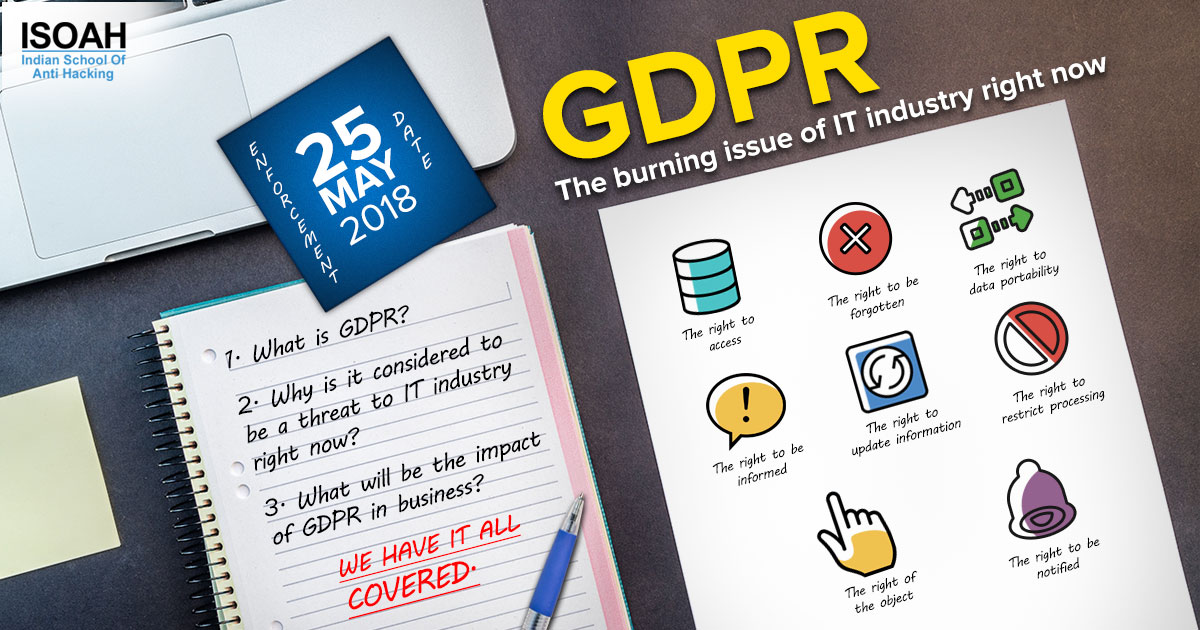

Over the last few weeks, have you been bombarded with emails from companies telling you that they have updated their privacy policies? The reason is the EU General Data Protection Regulation (GDPR), which has come into force to give individuals more control over their personal data. Every company that processes the personal data of people residing in the EU has to comply with the new rules.

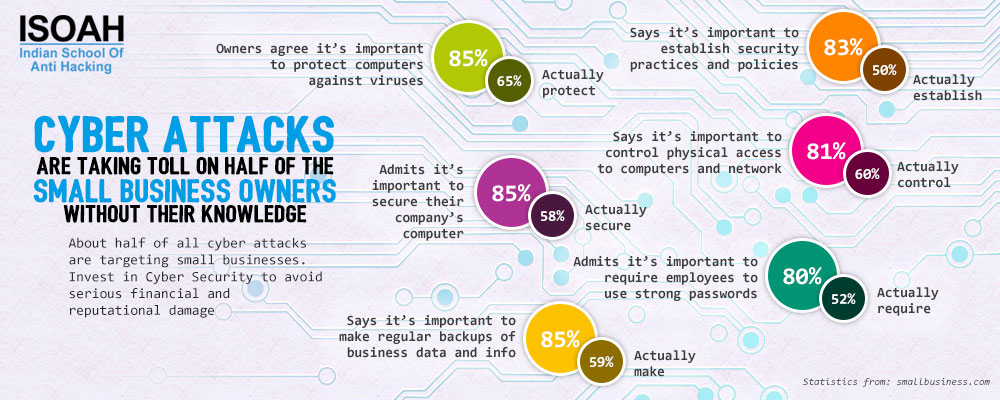

While GDPR is meant to force enterprises to take greater care of their clients' personal data, it has implications for the development of emerging technologies too. Artificial Intelligence, the technology generating the most buzz these days, is about to hit a major roadblock named GDPR. Tech companies amass large volumes of user data to hone the artificial intelligence algorithms that power their applications and platforms. Until GDPR came onto the scene, it was easier for companies to evade accountability when their practices pushed them into legally and ethically gray areas. Now, GDPR imposes unprecedented restrictions on the collection and handling of user data in the EU and slaps heavy penalties on companies that fail to comply. Let's see how it is going to affect the rapid pace of AI development.

Data ownership and AI:

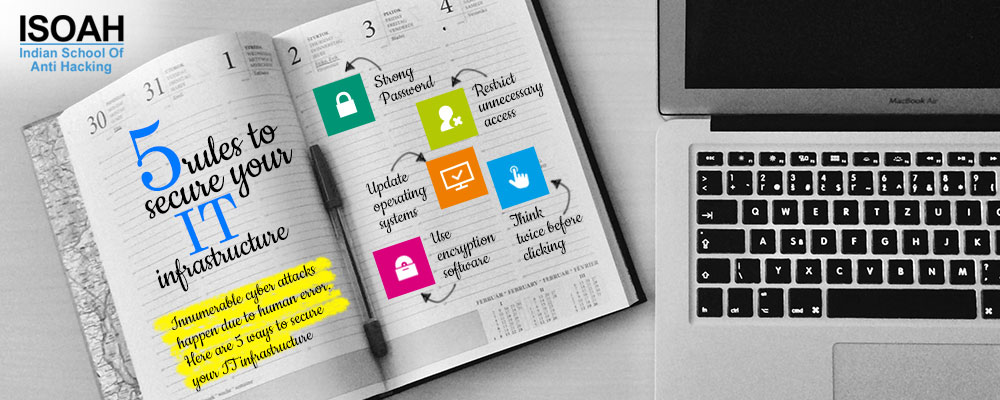

Machine learning, the core pillar of Artificial Intelligence, involves complex algorithms that progressively improve themselves by feasting on data. The more data they consume, the better they get at spotting patterns. The algorithms process the data and produce insights for a human or another machine to act on. A few key areas of GDPR are likely to affect AI the most: consent and permission to use data, understanding how the data is being processed, and the right to be forgotten. Consent is complicated because, under GDPR, organizations need to obtain permission from the user to capture data for specific use cases only. Previously, companies needed only a vague consent from users to collect all sorts of data. Under GDPR, companies have to reveal the full scope of the information they collect, protect it, and prevent unnecessary access to it. AI companies need to be more meticulous about the data they collect, process and share.
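To make purpose-specific consent concrete, here is a minimal sketch of how a training pipeline might filter records by the purposes each user has agreed to. The `ConsentRegistry` class, the `rows_usable_for` helper and the purpose names are all hypothetical and chosen only for illustration; a real system would persist consent and record when and how it was given.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRegistry:
    """Hypothetical in-memory map from user id to the purposes they consented to."""
    _purposes: dict[str, set[str]] = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        self._purposes.setdefault(user_id, set()).add(purpose)

    def withdraw(self, user_id: str, purpose: str) -> None:
        self._purposes.get(user_id, set()).discard(purpose)

    def allows(self, user_id: str, purpose: str) -> bool:
        return purpose in self._purposes.get(user_id, set())


def rows_usable_for(rows: list[dict], registry: ConsentRegistry, purpose: str) -> list[dict]:
    """Keep only the rows whose owner consented to this specific purpose."""
    return [row for row in rows if registry.allows(row["user_id"], purpose)]


registry = ConsentRegistry()
registry.grant("u1", "order_fulfilment")
registry.grant("u1", "model_training")
registry.grant("u2", "order_fulfilment")  # u2 never agreed to model training

rows = [{"user_id": "u1", "basket": 3}, {"user_id": "u2", "basket": 5}]
training_rows = rows_usable_for(rows, registry, "model_training")
print(training_rows)
```

Only the row belonging to `u1` reaches the training set, because consent given for one purpose does not carry over to another.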

The right to be forgotten and AI:

Under GDPR, individuals have the right to demand that a company erase all their data from its servers. AI companies face a big issue here, as they have a vested interest in consuming as much data as possible to perform tasks such as predicting trends and user behavior. Erasing data manually can be a daunting task when it is scattered across different servers and stored in both structured and unstructured formats. If an organization wants to reuse data for a purpose not outlined in the original consent, it may need to go back and ask for additional permission. This process can prove costly and time-consuming, which could slow innovation. GDPR will also raise the cost of human mistakes in handling data, and the irony is that AI itself can be the solution in this regard.
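One way to avoid manual erasure is to give every data store the same deletion interface and fan a request out to all of them. The sketch below assumes two hypothetical in-memory stores, one structured and one unstructured; the class names, the `delete_user` method and the `erase_user` function are illustrative and not part of any real library.

```python
class TableStore:
    """Hypothetical structured store: a list of rows keyed by user_id."""

    def __init__(self, name: str, rows: list[dict]):
        self.name = name
        self.rows = rows

    def delete_user(self, user_id: str) -> int:
        before = len(self.rows)
        self.rows = [row for row in self.rows if row["user_id"] != user_id]
        return before - len(self.rows)


class DocumentStore:
    """Hypothetical unstructured store: doc_id -> (owner_id, free text)."""

    def __init__(self, name: str, documents: dict[str, tuple[str, str]]):
        self.name = name
        self.documents = documents

    def delete_user(self, user_id: str) -> int:
        doomed = [doc_id for doc_id, (owner, _) in self.documents.items()
                  if owner == user_id]
        for doc_id in doomed:
            del self.documents[doc_id]
        return len(doomed)


def erase_user(user_id: str, stores: list) -> dict[str, int]:
    """Run the erasure in every store and report how many items each one removed."""
    return {store.name: store.delete_user(user_id) for store in stores}


crm = TableStore("crm", [
    {"user_id": "u1", "email": "a@example.com"},
    {"user_id": "u2", "email": "b@example.com"},
    {"user_id": "u1", "email": "a.alt@example.com"},
])
tickets = DocumentStore("support_tickets", {
    "t1": ("u1", "My order arrived late."),
    "t2": ("u2", "Please update my address."),
})

report = erase_user("u1", [crm, tickets])
print(report)
```

The returned report doubles as an audit trail showing which systems held data about the user. Backups, logs and models already trained on the data are outside the scope of this sketch.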

Right to explanation and AI:

The "Right to Explanation" under GDPR means that companies must tell users how their data will be processed. Users must know when they are directly or indirectly subject to AI algorithms, and they should be able to challenge the decisions those algorithms make and ask how a conclusion was reached. Organizations will have to be much more aware of the algorithms they run and how they operate. They are bound to explain to the users whose data they have collected what the algorithms do, how they work, and how the results will be used. GDPR will hold AI companies to account for the decisions their algorithms make. So, if machine learning algorithms do the processing, they must be designed in a way that enables companies to explain the decisions those algorithms make on their behalf. This will be one of the biggest challenges the AI industry faces.
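As an illustration of what an explainable decision can look like, the sketch below uses a simple linear scoring model, where each feature's contribution is just its weight times its value. This is one deliberately simple technique and not something GDPR prescribes; the function name, feature names, weights and threshold are all made up for the example.

```python
def explain_linear_decision(weights: dict[str, float], bias: float,
                            features: dict[str, float], threshold: float) -> dict:
    """Score a case with a linear model and record each feature's contribution."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    # List the most influential features first, whatever their sign.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return {"score": score, "approved": score >= threshold, "contributions": ranked}


# Hypothetical credit-style model: income helps, missed payments hurt.
weights = {"income_k": 0.5, "missed_payments": -2.0}
explanation = explain_linear_decision(
    weights, bias=1.0,
    features={"income_k": 10, "missed_payments": 3},
    threshold=1.0,
)
print(explanation)
```

Storing a record like this next to each decision gives the company something concrete to show a user who challenges the outcome. More complex models need dedicated explanation methods, which this sketch does not cover.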

Outsourcing AI:

GDPR is going to be a nightmare for organizations that make their data available to third parties. The Cambridge Analytica scandal is still fresh in people's minds: the social media giant failed to prevent the data mining firm from collecting and abusing the data of 87 million users. GDPR will also have implications for companies that outsource their AI functionality and make their data available to AI providers. Businesses now need to seek out AI providers that believe in owning the algorithms and not the data. If personal data is used to make automated decisions about people, the company must be able to explain the logic behind the decision-making process.

AI innovation and GDPR:

Article 22 of GDPR restricts the use of AI as the sole decision maker in choices that have legal or similarly significant effects on users. In practice, companies need a human to be able to review such decisions made by AI algorithms, which of course raises labor costs. The new regulation will not only challenge the practices and habits that AI companies have adopted but also force them to find new ways to innovate while maintaining ethical standards and privacy. Data from many users are pooled together to train an AI algorithm. If the enforcers of GDPR decide that a company must erase the effect of a unit of data on the AI model in addition to deleting the data itself, companies using AI will have to find ways to explain granularly how a model works and to fine-tune the model so that it "forgets" the data in question. Leading AI researchers are working on model explainability and tunability, and the GDPR deletion mandate could accelerate progress in these areas. The only way out is to build trust with consumers instead of sneakily capturing their information. Improved data infrastructure will enable early AI applications to demonstrate their value, encouraging customers to share their data voluntarily.
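The human review step can be sketched as a simple routing rule: automated outcomes that carry a legal or similarly significant effect are held in a queue for a person to confirm, while everything else is applied directly. The `Decision` class, the `route` function and the status strings below are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    subject_id: str
    outcome: str
    significant_effect: bool  # legal or similarly significant effect on the person


def route(decision: Decision, review_queue: list[Decision]) -> str:
    """Hold significant decisions for a human reviewer; apply the rest automatically."""
    if decision.significant_effect:
        review_queue.append(decision)
        return "pending_human_review"
    return "auto_applied"


review_queue: list[Decision] = []
statuses = [
    route(Decision("u1", "loan_rejected", significant_effect=True), review_queue),
    route(Decision("u2", "show_recommendation", significant_effect=False), review_queue),
]
print(statuses, [d.subject_id for d in review_queue])
```

Deciding which outcomes count as significant is a legal judgment that this sketch leaves to whoever sets the `significant_effect` flag.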